Forward and Backward Pass in a Simple Neural Network

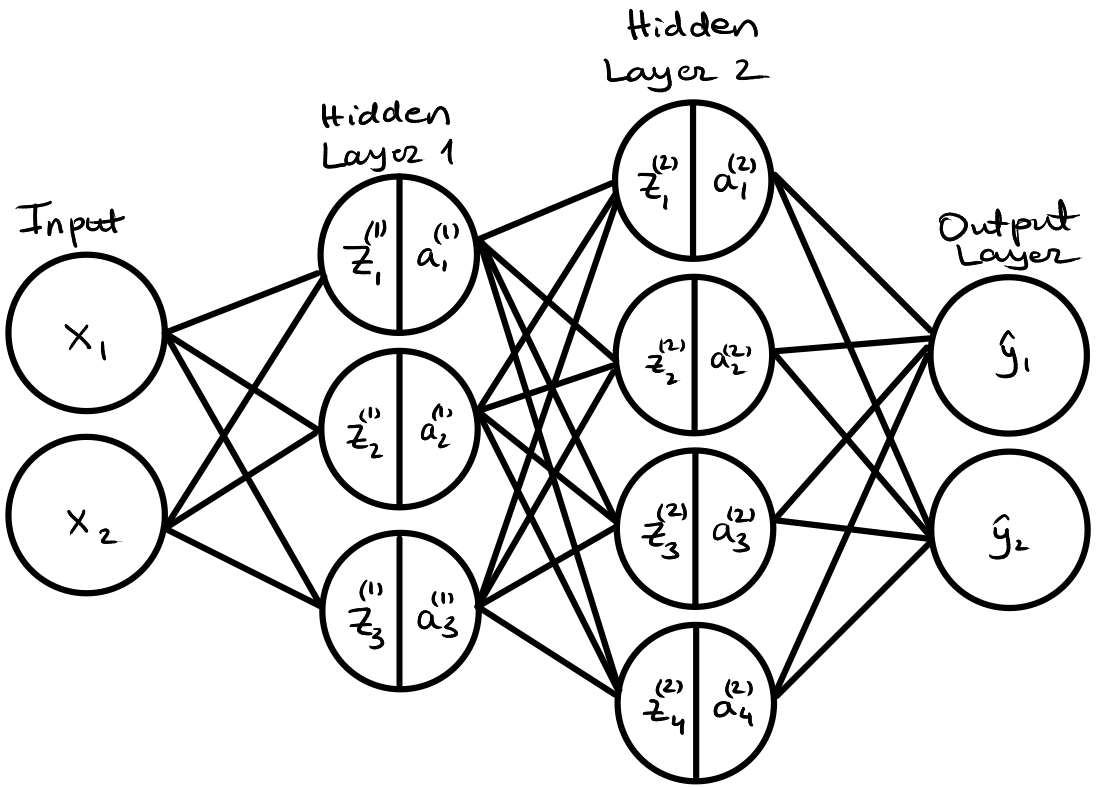

This example uses a simple fully connected neural network with two-dimensional inputs, two hidden layers with 3 and 4 neurons respectively, and two-dimensional outputs. It works through the forward and backward passes, first for a single sample and then for mini-batches.

The subscripts are indices of a matrix or a vector, while superscripts indicate the layer. For example, in $w^{(1)}_{21}$, the superscript indicates that the weight is for a neuron in Layer 1, and the subscript indicates that it is the weight associated with the 2nd neuron $a^{(1)}_2$ and the 1st input $x_1$.

Forward Pass

Layer 1 (Hidden Layer)

$$ z_i^{(1)} = b_i^{(1)} + \sum_j w_{ij}^{(1)} x_j \\ a^{(1)}_i = \tanh(z^{(1)}_i) $$

$$ z_1^{(1)} = (w_{11}^{(1)}x_1 + w_{12}^{(1)}x_2 + b_1^{(1)}) \\ z_2^{(1)} = (w_{21}^{(1)}x_1 + w_{22}^{(1)}x_2 + b_2^{(1)}) \\ z_3^{(1)} = (w_{31}^{(1)}x_1 + w_{32}^{(1)}x_2 + b_3^{(1)}) \\ a_1^{(1)} = \tanh{(z_1^{(1)})} \\ a_2^{(1)} = \tanh{(z_2^{(1)})} \\ a_3^{(1)} = \tanh{(z_3^{(1)})} \\ $$
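As a minimal sketch of the Layer 1 equations, the snippet below computes $z^{(1)}$ and $a^{(1)}$ with explicit sums that mirror the index notation. The helper name `dense_tanh` and all numeric values are made up for illustration. Python indices start at 0, so `W1[1][0]` plays the role of $w^{(1)}_{21}$.

```python
import math

def dense_tanh(W, b, x):
    """Return (z, a) with z_i = b_i + sum_j W[i][j] * x[j] and a_i = tanh(z_i)."""
    z = [b_i + sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row, b_i in zip(W, b)]
    a = [math.tanh(z_i) for z_i in z]
    return z, a

# Hypothetical values: 3 neurons and 2 inputs, so W1 has 3 rows and 2 columns.
W1 = [[0.1, -0.2], [0.4, 0.3], [-0.5, 0.2]]
b1 = [0.0, 0.1, -0.1]
x = [1.0, 2.0]

z1, a1 = dense_tanh(W1, b1, x)
print(z1)  # pre-activations z^{(1)}
print(a1)  # activations a^{(1)}, each strictly between -1 and 1
```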

Layer 2 (Hidden Layer)

$$ z_k^{(2)} = b_k^{(2)} + \sum_i w_{ki}^{(2)} a^{(1)}_i \\ a^{(2)}_k = \tanh(z^{(2)}_k) $$

$$ z_1^{(2)} = (w_{11}^{(2)}a_1^{(1)} + w_{12}^{(2)}a_2^{(1)} + w_{13}^{(2)}a_3^{(1)} + b_1^{(2)}) \\ z_2^{(2)} = (w_{21}^{(2)}a_1^{(1)} + w_{22}^{(2)}a_2^{(1)} + w_{23}^{(2)}a_3^{(1)} + b_2^{(2)}) \\ z_3^{(2)} = (w_{31}^{(2)}a_1^{(1)} + w_{32}^{(2)}a_2^{(1)} + w_{33}^{(2)}a_3^{(1)} + b_3^{(2)}) \\ z_4^{(2)} = (w_{41}^{(2)}a_1^{(1)} + w_{42}^{(2)}a_2^{(1)} + w_{43}^{(2)}a_3^{(1)} + b_4^{(2)}) \\ a_1^{(2)} = \tanh{(z_1^{(2)})} \\ a_2^{(2)} = \tanh{(z_2^{(2)})} \\ a_3^{(2)} = \tanh{(z_3^{(2)})} \\ a_4^{(2)} = \tanh{(z_4^{(2)})} \\ $$

Layer 3 (Output Layer)

$$ \hat{y}_l = b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \\ $$

$$ \hat{y}_1 = w^{(3)}_{11}a_1^{(2)} + w^{(3)}_{12}a_2^{(2)} + w^{(3)}_{13}a_3^{(2)} + w^{(3)}_{14}a_4^{(2)} + b_1^{(3)} \\ \hat{y}_2 = w^{(3)}_{21}a_1^{(2)} + w^{(3)}_{22}a_2^{(2)} + w^{(3)}_{23}a_3^{(2)} + w^{(3)}_{24}a_4^{(2)} + b_2^{(3)} \\ $$
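The three layers can be chained into one forward pass. The sketch below is one possible pure-Python arrangement, not a reference implementation: the names `affine`, `forward`, and `init_params`, the uniform random initialization, and the input values are all assumptions made for illustration.

```python
import math
import random

def affine(W, b, v):
    """z_i = b_i + sum_j W[i][j] * v[j]"""
    return [b_i + sum(w * v_j for w, v_j in zip(row, v)) for row, b_i in zip(W, b)]

def forward(params, x):
    """Run the 2-3-4-2 network and return every intermediate quantity."""
    W1, b1, W2, b2, W3, b3 = params
    z1 = affine(W1, b1, x)
    a1 = [math.tanh(z) for z in z1]
    z2 = affine(W2, b2, a1)
    a2 = [math.tanh(z) for z in z2]
    y_hat = affine(W3, b3, a2)  # the output layer has no activation
    return z1, a1, z2, a2, y_hat

def init_params(sizes, seed=0):
    """Hypothetical initialization: uniform values in [-1, 1]."""
    rng = random.Random(seed)
    params = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        params.append([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)])
        params.append([rng.uniform(-1, 1) for _ in range(n_out)])
    return params

params = init_params([2, 3, 4, 2])
z1, a1, z2, a2, y_hat = forward(params, [0.5, -1.0])
print(len(a1), len(a2), len(y_hat))  # 3 4 2
```

Returning the intermediate values $z$ and $a$ is a deliberate choice here, since the backward pass needs them.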

Mean Squared Error Loss Function

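The simplest loss function we can use here is the mean squared error (MSE) loss. For a single sample, we use the sum of squared errors over the output components and omit the constant $1/n$ factor (here $n = 2$), which only rescales the gradients: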

$$ L = \sum_l (\hat{y}_l - y_l)^2 $$
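The loss as written above sums the squared differences over the output components. A minimal sketch, with the function name `squared_error` and the numbers chosen only for illustration:

```python
def squared_error(y_hat, y):
    """L = sum_l (y_hat_l - y_l)^2 for a single sample."""
    return sum((p - t) ** 2 for p, t in zip(y_hat, y))

# Hypothetical prediction and target: (1 - 0)^2 + (3 - 1)^2 = 5
loss = squared_error([1.0, 3.0], [0.0, 1.0])
print(loss)  # 5.0
```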

Backward Pass

Model parameters

In the example neural network, we have 35 parameters in total: 6 weights and 3 biases for Layer 1, 12 weights and 4 biases for Layer 2, and 8 weights and 2 biases for Layer 3. We now need to find how to update these parameters in order to minimize the loss function. To do so, we need the partial derivatives of the loss with respect to each parameter. Note that for now, we are sticking to the single-sample case; we will discuss batches after this.

$$ \frac{\partial L}{\partial w^{(1)}_{mn}}, \quad \frac{\partial L}{\partial b^{(1)}_{m}}, \quad \frac{\partial L}{\partial w^{(2)}_{mn}}, \quad \frac{\partial L}{\partial b^{(2)}_{m}}, \quad \frac{\partial L}{\partial w^{(3)}_{mn}}, \quad \frac{\partial L}{\partial b^{(3)}_{m}} $$

Note that the ranges for the subscripts $m$ and $n$ depend on the size of the layers.
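The parameter count quoted above follows directly from the layer sizes: each layer has one weight per (output, input) pair and one bias per output. A quick check, where the list `sizes` is just a convenient encoding of the 2-3-4-2 architecture:

```python
sizes = [2, 3, 4, 2]  # inputs, hidden layer 1, hidden layer 2, outputs

# One weight per (output, input) pair and one bias per output neuron.
weights = [n_in * n_out for n_in, n_out in zip(sizes[:-1], sizes[1:])]
biases = sizes[1:]
total = sum(weights) + sum(biases)

print(weights, biases, total)  # [6, 12, 8] [3, 4, 2] 35
```

The same pairs give the subscript ranges: for Layer 1, $m$ runs over the 3 neurons and $n$ over the 2 inputs.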

$\frac{\partial L}{\partial b^{(3)}_{m}}$

$$ \frac{\partial L}{\partial b^{(3)}_{m}} = \frac{\partial}{\partial b^{(3)}_{m}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial b^{(3)}_{m}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(3)}_{m}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(3)}_{m}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[ \frac{\partial}{\partial b^{(3)}_{m}}b_l^{(3)} + \frac{\partial}{\partial b^{(3)}_{m}}\sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \delta_{ml} \\[.2cm] = 2(\hat{y}_m - y_m) $$

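where $\delta_{ml}$ is the Kronecker delta, $\delta_{ml} = 1$ if $m = l$ and $0$ otherwise. The second term in the brackets vanishes because neither $w^{(3)}_{lk}$ nor $a_k^{(2)}$ depends on $b^{(3)}_m$.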

$\frac{\partial L}{\partial w^{(3)}_{mn}}$

$$ \frac{\partial L}{\partial w^{(3)}_{mn}} = \frac{\partial}{\partial w^{(3)}_{mn}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial w^{(3)}_{mn}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(3)}_{mn}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(3)}_{mn}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[\frac{\partial}{\partial w^{(3)}_{mn}}b_l^{(3)} + \frac{\partial}{\partial w^{(3)}_{mn}}\sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \frac{\partial}{\partial w^{(3)}_{mn}} \left[w^{(3)}_{lk}a_k^{(2)}\right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \delta_{ml} \delta_{nk} a^{(2)}_k \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \delta_{ml} \sum_k \delta_{nk} a^{(2)}_k \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \delta_{ml} a^{(2)}_n \\[.2cm] = 2(\hat{y}_m - y_m) a^{(2)}_n $$
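The two output-layer results translate almost literally into code. In this sketch the function name `output_layer_grads` and the numbers are hypothetical. In a real run, `y_hat` and `a2` would come from the forward pass rather than being typed in by hand.

```python
def output_layer_grads(y_hat, y, a2):
    """dL/db3_m = 2 (y_hat_m - y_m) and dL/dW3_mn = 2 (y_hat_m - y_m) a2_n."""
    grad_b3 = [2 * (p - t) for p, t in zip(y_hat, y)]
    grad_W3 = [[g * a for a in a2] for g in grad_b3]
    return grad_b3, grad_W3

# Hypothetical values chosen so the arithmetic is easy to follow by hand.
a2 = [0.5, -0.5, 0.25, 1.0]
y_hat = [1.0, -2.0]
y = [0.0, 0.0]

grad_b3, grad_W3 = output_layer_grads(y_hat, y, a2)
print(grad_b3)  # [2.0, -4.0]
print(grad_W3)  # [[1.0, -1.0, 0.5, 2.0], [-2.0, 2.0, -1.0, -4.0]]
```

The weight gradient has the same 2-by-4 shape as $w^{(3)}$: row $m$ is the bias gradient for output $m$ multiplied by each activation $a^{(2)}_n$.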

$\frac{\partial L}{\partial b^{(2)}_{m}}$

$$ \frac{\partial L}{\partial b^{(2)}_{m}} = \frac{\partial}{\partial b^{(2)}_{m}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial b^{(2)}_{m}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(2)}_{m}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(2)}_{m}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[ \frac{\partial}{\partial b^{(2)}_{m}} b_l^{(3)} + \frac{\partial}{\partial b^{(2)}_{m}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[\frac{\partial}{\partial b^{(2)}_{m}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \frac{\partial}{\partial b^{(2)}_{m}} \left[ w^{(3)}_{lk}a_k^{(2)} \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial}{\partial b^{(2)}_{m}} a_k^{(2)} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial a_k^{(2)}}{\partial z_k^{(2)}} \frac{\partial z_k^{(2)}}{\partial b^{(2)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial z_k^{(2)}}{\partial b^{(2)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial b^{(2)}_{m}} \left[ b_k^{(2)} + \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \left[ \frac{\partial}{\partial b^{(2)}_{m}} b_k^{(2)} + \frac{\partial}{\partial b^{(2)}_{m}} \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \delta_{mk} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) w^{(3)}_{lm} \left (1-\tanh \left(z_m^{(2)}\right )^2 \right) $$

$\frac{\partial L}{\partial w^{(2)}_{mn}}$

$$ \frac{\partial L}{\partial w^{(2)}_{mn}} = \frac{\partial}{\partial w^{(2)}_{mn}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial w^{(2)}_{mn}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(2)}_{mn}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(2)}_{mn}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[ \frac{\partial}{\partial w^{(2)}_{mn}} b_l^{(3)} + \frac{\partial}{\partial w^{(2)}_{mn}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[\frac{\partial}{\partial w^{(2)}_{mn}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \frac{\partial}{\partial w^{(2)}_{mn}} \left[ w^{(3)}_{lk}a_k^{(2)} \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial}{\partial w^{(2)}_{mn}} a_k^{(2)} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial a_k^{(2)}}{\partial z_k^{(2)}} \frac{\partial z_k^{(2)}}{\partial w^{(2)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial z_k^{(2)}}{\partial w^{(2)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial w^{(2)}_{mn}} \left[ b_k^{(2)} + \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \left[ \frac{\partial}{\partial w^{(2)}_{mn}} b_k^{(2)} + \frac{\partial}{\partial w^{(2)}_{mn}} \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \left[ \frac{\partial}{\partial w^{(2)}_{mn}} \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i \frac{\partial}{\partial w^{(2)}_{mn}} 
\left[ w_{ki}^{(2)} a^{(1)}_i \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i \delta_{mk} \delta_{ni} a^{(1)}_i \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \delta_{mk} \sum_i \delta_{ni} a^{(1)}_i \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \delta_{mk} a^{(1)}_n \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) w^{(3)}_{lm} \left (1-\tanh \left(z_m^{(2)}\right )^2 \right) a^{(1)}_n $$
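One way to gain confidence in the Layer 2 results is to compare them against a central finite difference of the loss. The sketch below does this for a single weight, treating $a^{(1)}$ as a given input. All names and numeric values are made up for illustration.

```python
import math

def affine(W, b, v):
    return [b_i + sum(w * v_j for w, v_j in zip(row, v)) for row, b_i in zip(W, b)]

def loss_from_a1(W2, b2, W3, b3, a1, y):
    """Run Layers 2 and 3 starting from a1; return (loss, z2, y_hat)."""
    z2 = affine(W2, b2, a1)
    a2 = [math.tanh(z) for z in z2]
    y_hat = affine(W3, b3, a2)
    return sum((p - t) ** 2 for p, t in zip(y_hat, y)), z2, y_hat

def layer2_grads(W3, z2, y_hat, y, a1):
    """dL/db2_m = sum_l 2 (y_hat_l - y_l) W3[l][m] (1 - tanh(z2_m)^2); dL/dW2_mn multiplies by a1_n."""
    g3 = [2 * (p - t) for p, t in zip(y_hat, y)]
    grad_b2 = [
        sum(g3[l] * W3[l][m] for l in range(len(g3))) * (1 - math.tanh(z2[m]) ** 2)
        for m in range(len(z2))
    ]
    grad_W2 = [[g * a for a in a1] for g in grad_b2]
    return grad_b2, grad_W2

# Hypothetical inputs and parameters.
a1 = [0.3, -0.6, 0.9]
y = [1.0, -1.0]
W2 = [[0.2, -0.1, 0.4], [0.5, 0.3, -0.2], [-0.3, 0.1, 0.6], [0.1, -0.4, 0.2]]
b2 = [0.0, 0.1, -0.1, 0.2]
W3 = [[0.3, -0.5, 0.2, 0.1], [-0.2, 0.4, 0.6, -0.3]]
b3 = [0.05, -0.05]

_, z2, y_hat = loss_from_a1(W2, b2, W3, b3, a1, y)
grad_b2, grad_W2 = layer2_grads(W3, z2, y_hat, y, a1)

# Central finite difference on W2[1][0], i.e. w^{(2)}_{21} in the 1-based notation.
eps = 1e-6
W2[1][0] += eps
loss_plus = loss_from_a1(W2, b2, W3, b3, a1, y)[0]
W2[1][0] -= 2 * eps
loss_minus = loss_from_a1(W2, b2, W3, b3, a1, y)[0]
W2[1][0] += eps  # restore the original value
numeric = (loss_plus - loss_minus) / (2 * eps)

print(grad_W2[1][0], numeric)  # the two numbers should agree closely
```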

$\frac{\partial L}{\partial b^{(1)}_{m}}$

$$ \frac{\partial L}{\partial b^{(1)}_{m}} = \frac{\partial}{\partial b^{(1)}_{m}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial b^{(1)}_{m}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(1)}_{m}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial b^{(1)}_{m}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[ \frac{\partial}{\partial b^{(1)}_{m}} b_l^{(3)} + \frac{\partial}{\partial b^{(1)}_{m}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[\frac{\partial}{\partial b^{(1)}_{m}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \frac{\partial}{\partial b^{(1)}_{m}} \left[ w^{(3)}_{lk}a_k^{(2)} \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial}{\partial b^{(1)}_{m}} a_k^{(2)} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial a_k^{(2)}}{\partial z_k^{(2)}} \frac{\partial z_k^{(2)}}{\partial b^{(1)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial z_k^{(2)}}{\partial b^{(1)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial b^{(1)}_{m}} \left[ b_k^{(2)} + \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \left[ \frac{\partial}{\partial b^{(1)}_{m}} b_k^{(2)} + \frac{\partial}{\partial b^{(1)}_{m}} \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial b^{(1)}_{m}} \sum_i w_{ki}^{(2)} a^{(1)}_i\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i \frac{\partial}{\partial b^{(1)}_{m}} \left[w_{ki}^{(2)} a^{(1)}_i 
\right ] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \frac{\partial a^{(1)}_i}{\partial b^{(1)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \frac{\partial a^{(1)}_i }{\partial z^{(1)}_i} \frac{\partial z^{(1)}_i}{\partial b^{(1)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \frac{\partial z^{(1)}_i}{\partial b^{(1)}_{m}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \frac{\partial}{\partial b^{(1)}_{m}} \left[ b_i^{(1)} + \sum_j w_{ij}^{(1)} x_j \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \left[ \frac{\partial}{\partial b^{(1)}_{m}} b_i^{(1)} + \frac{\partial}{\partial b^{(1)}_{m}} \sum_j w_{ij}^{(1)} x_j \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \delta_{mi} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) w_{km}^{(2)} \left( 1-\tanh\left( z^{(1)}_m \right)^2 \right) $$
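The three bias gradients derived so far share nested factors, which is easier to see when they are restated recursively. The symbol $g$ below is introduced only for this restatement; it is not part of the notation used elsewhere and is unrelated to the Kronecker delta $\delta$.

$$ g^{(3)}_l \equiv \frac{\partial L}{\partial b^{(3)}_l} = 2(\hat{y}_l - y_l) \\[.2cm] g^{(2)}_k \equiv \frac{\partial L}{\partial b^{(2)}_k} = \left(1-\tanh\left(z_k^{(2)}\right)^2\right) \sum_l g^{(3)}_l w^{(3)}_{lk} \\[.2cm] g^{(1)}_m \equiv \frac{\partial L}{\partial b^{(1)}_m} = \left(1-\tanh\left(z_m^{(1)}\right)^2\right) \sum_k g^{(2)}_k w^{(2)}_{km} $$

Substituting $g^{(2)}_k$ and $g^{(3)}_l$ into the last line recovers the final expression of the derivation above. In the same shorthand, the weight gradients already derived read $\partial L / \partial w^{(3)}_{mn} = g^{(3)}_m a^{(2)}_n$ and $\partial L / \partial w^{(2)}_{mn} = g^{(2)}_m a^{(1)}_n$, so each layer reuses the quantity computed for the layer after it.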

$\frac{\partial L}{\partial w^{(1)}_{mn}}$

$$ \frac{\partial L}{\partial w^{(1)}_{mn}} = \frac{\partial}{\partial w^{(1)}_{mn}} \sum_l (\hat{y}_l - y_l)^2 = \sum_l \frac{\partial}{\partial w^{(1)}_{mn}} (\hat{y}_l - y_l)^2 \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(1)}_{mn}} \hat{y}_l \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \frac{\partial}{\partial w^{(1)}_{mn}} \left[ b_l^{(3)} + \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[ \frac{\partial}{\partial w^{(1)}_{mn}} b_l^{(3)} + \frac{\partial}{\partial w^{(1)}_{mn}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \left[\frac{\partial}{\partial w^{(1)}_{mn}} \sum_k w^{(3)}_{lk}a_k^{(2)} \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k \frac{\partial}{\partial w^{(1)}_{mn}} \left[ w^{(3)}_{lk}a_k^{(2)} \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial}{\partial w^{(1)}_{mn}} a_k^{(2)} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \frac{\partial a_k^{(2)}}{\partial z_k^{(2)}} \frac{\partial z_k^{(2)}}{\partial w^{(1)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial z_k^{(2)}}{\partial w^{(1)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial w^{(1)}_{mn}} \left[ b_k^{(2)} + \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \left[ \frac{\partial}{\partial w^{(1)}_{mn}} b_k^{(2)} + \frac{\partial}{\partial w^{(1)}_{mn}} \sum_i w_{ki}^{(2)} a^{(1)}_i \right]\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \frac{\partial}{\partial w^{(1)}_{mn}} \sum_i w_{ki}^{(2)} a^{(1)}_i\\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i \frac{\partial}{\partial w^{(1)}_{mn}} 
\left[w_{ki}^{(2)} a^{(1)}_i \right ] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \frac{\partial a^{(1)}_i}{\partial w^{(1)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \frac{\partial a^{(1)}_i }{\partial z^{(1)}_i} \frac{\partial z^{(1)}_i}{\partial w^{(1)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \frac{\partial z^{(1)}_i}{\partial w^{(1)}_{mn}} \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \frac{\partial}{\partial w^{(1)}_{mn}} \left[ b_i^{(1)} + \sum_j w_{ij}^{(1)} x_j \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \left[ \frac{\partial}{\partial w^{(1)}_{mn}} b_i^{(1)} + \frac{\partial}{\partial w^{(1)}_{mn}} \sum_j w_{ij}^{(1)} x_j \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \frac{\partial}{\partial w^{(1)}_{mn}} \sum_j w_{ij}^{(1)} x_j \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \sum_j \frac{\partial}{\partial w^{(1)}_{mn}} \left[w_{ij}^{(1)} x_j \right] \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \sum_j \delta_{mi} \delta_{nj} x_j \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh 
\left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \delta_{mi} \sum_j \delta_{nj} x_j \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) \sum_i w_{ki}^{(2)} \left( 1-\tanh\left( z^{(1)}_i \right)^2 \right) \delta_{mi} x_n \\[.2cm] = \sum_l 2(\hat{y}_l - y_l) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_k^{(2)}\right )^2 \right) w_{km}^{(2)} \left( 1-\tanh\left( z^{(1)}_m \right)^2 \right) x_n $$
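The final Layer 1 expressions can be coded directly as the double sum over $l$ and $k$ and then checked against finite differences. This is a sketch under assumptions: the helper names, the random initialization, and the sample values are hypothetical. It also uses the identity $1 - \tanh(z)^2 = 1 - a^2$ to reuse the stored activations.

```python
import math
import random

def affine(W, b, v):
    return [b_i + sum(w * v_j for w, v_j in zip(row, v)) for row, b_i in zip(W, b)]

def forward(params, x):
    W1, b1, W2, b2, W3, b3 = params
    a1 = [math.tanh(z) for z in affine(W1, b1, x)]
    a2 = [math.tanh(z) for z in affine(W2, b2, a1)]
    return a1, a2, affine(W3, b3, a2)

def loss(params, x, y):
    y_hat = forward(params, x)[2]
    return sum((p - t) ** 2 for p, t in zip(y_hat, y))

def layer1_grads(params, x, y):
    """Evaluate the closed-form dL/dW1 and dL/db1 as explicit sums over l and k."""
    W1, b1, W2, b2, W3, b3 = params
    a1, a2, y_hat = forward(params, x)
    grad_b1 = []
    for m in range(len(a1)):
        total = 0.0
        for l in range(len(y_hat)):
            for k in range(len(a2)):
                total += 2 * (y_hat[l] - y[l]) * W3[l][k] * (1 - a2[k] ** 2) * W2[k][m]
        grad_b1.append(total * (1 - a1[m] ** 2))
    grad_W1 = [[g * x_n for x_n in x] for g in grad_b1]
    return grad_W1, grad_b1

# Hypothetical parameters (uniform in [-1, 1]) and a single hypothetical sample.
rng = random.Random(0)
sizes = [2, 3, 4, 2]
params = []
for n_in, n_out in zip(sizes[:-1], sizes[1:]):
    params.append([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)])
    params.append([rng.uniform(-1, 1) for _ in range(n_out)])
x, y = [0.5, -1.0], [1.0, 0.0]

grad_W1, grad_b1 = layer1_grads(params, x, y)

# Compare every entry of dL/dW1 with a central finite difference.
eps = 1e-6
max_err = 0.0
W1 = params[0]
for m in range(3):
    for n in range(2):
        old = W1[m][n]
        W1[m][n] = old + eps
        plus = loss(params, x, y)
        W1[m][n] = old - eps
        minus = loss(params, x, y)
        W1[m][n] = old  # restore
        max_err = max(max_err, abs((plus - minus) / (2 * eps) - grad_W1[m][n]))
print(max_err)  # expected to be tiny if the formula is coded correctly
```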

Batch dimensions

In practice, we can feed multiple samples through the network at the same time during training. A collection of $B$ samples $\mathbf{x}_t$, indexed by $t = 1, \dots, B$, is known as a mini-batch. The loss function for a mini-batch is defined as

$$ L_{batch} = \frac{1}{B} \sum_{t=1}^B L_t = \frac{1}{B}\sum_{t=1}^B \sum_{l} (\hat{y}_{l, t} - y_{l, t})^2 $$

Essentially, the batch loss is the average of the per-sample losses $L_t$ over all $B$ samples. Because differentiation is linear, the partial derivative with respect to any model parameter $\theta$ is likewise the average over the samples:

$$ \frac{\partial L_{batch}}{\partial \theta} = \frac{\partial}{\partial \theta} \left[ \frac{1}{B} \sum_{t=1}^B L_t \right] = \frac{1}{B} \sum_{t=1}^B \frac{\partial L_t}{\partial \theta} $$
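In code, this averaging is an element-wise mean over the per-sample gradients. A minimal sketch, assuming the gradients of each sample have been flattened into a plain list. The function name `mean_gradient` and the numbers are hypothetical.

```python
def mean_gradient(per_sample_grads):
    """Average equally shaped flat gradient vectors, one vector per sample."""
    B = len(per_sample_grads)
    return [sum(column) / B for column in zip(*per_sample_grads)]

# Hypothetical per-sample gradients dL_t/dtheta for B = 4 samples and 2 parameters.
per_sample = [[1.0, -2.0], [3.0, 0.0], [-1.0, 4.0], [5.0, 2.0]]
print(mean_gradient(per_sample))  # [2.0, 1.0]
```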

Parameter partial derivatives with mini-batch

Layer 3 (Output Layer)

$$ \frac{\partial L_{batch}}{\partial b^{(3)}_m} = \frac{1}{B} \sum_{t=1}^B 2(\hat{y}_{m, t} - y_{m, t}) $$

where $\hat{y}_{m, t}$ is the model prediction of output neuron $m$ for sample $\mathbf{x}_t$.

$$ \frac{\partial L_{batch}}{\partial w^{(3)}_{mn}} = \frac{1}{B} \sum_{t=1}^B 2(\hat{y}_{m,t} - y_{m,t}) a^{(2)}_{n,t} $$

Layer 2 (Hidden Layer)

$$ \frac{\partial L_{batch}}{\partial b^{(2)}_{m}} = \frac{1}{B} \sum_{t=1}^B \sum_l 2(\hat{y}_{l,t} - y_{l,t}) w^{(3)}_{lm} \left (1-\tanh \left(z_{m,t}^{(2)}\right )^2 \right) $$

$$ \frac{\partial L_{batch}}{\partial w^{(2)}_{mn}} = \frac{1}{B} \sum_{t=1}^B \sum_l 2(\hat{y}_{l,t} - y_{l,t}) w^{(3)}_{lm} \left (1-\tanh \left(z_{m,t}^{(2)}\right )^2 \right) a^{(1)}_{n, t} $$

Layer 1 (Hidden Layer)

$$ \frac{\partial L_{batch}}{\partial b^{(1)}_{m}} = \frac{1}{B} \sum_{t=1}^B \sum_l 2(\hat{y}_{l,t} - y_{l,t}) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_{k,t}^{(2)}\right )^2 \right) w_{km}^{(2)} \left( 1-\tanh\left( z^{(1)}_{m,t} \right)^2 \right) $$

$$ \frac{\partial L_{batch}}{\partial w^{(1)}_{mn}} = \frac{1}{B} \sum_{t=1}^B \sum_l 2(\hat{y}_{l,t} - y_{l,t}) \sum_k w^{(3)}_{lk} \left (1-\tanh \left(z_{k,t}^{(2)}\right )^2 \right) w_{km}^{(2)} \left( 1-\tanh\left( z^{(1)}_{m,t} \right)^2 \right) x_{n,t} $$
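Putting the pieces together, the sketch below computes all six mini-batch gradients by averaging per-sample gradients, then spot-checks a few entries against finite differences of $L_{batch}$. It is an illustration under assumptions rather than a reference implementation: every function name, the random initialization, and the random data are made up. The per-sample backward pass computes one intermediate vector per layer (`g3`, `g2`, `g1`) and reuses it, which is algebraically the same as the expanded sums above.

```python
import math
import random

def affine(W, b, v):
    return [b_i + sum(w * v_j for w, v_j in zip(row, v)) for row, b_i in zip(W, b)]

def forward(params, x):
    W1, b1, W2, b2, W3, b3 = params
    a1 = [math.tanh(z) for z in affine(W1, b1, x)]
    a2 = [math.tanh(z) for z in affine(W2, b2, a1)]
    return a1, a2, affine(W3, b3, a2)

def outer(g, v):
    return [[g_m * v_n for v_n in v] for g_m in g]

def sample_grads(params, x, y):
    """Single-sample gradients, ordered like params: W1, b1, W2, b2, W3, b3."""
    W1, b1, W2, b2, W3, b3 = params
    a1, a2, y_hat = forward(params, x)
    g3 = [2 * (p - t) for p, t in zip(y_hat, y)]
    g2 = [(1 - a2[k] ** 2) * sum(g3[l] * W3[l][k] for l in range(len(g3)))
          for k in range(len(a2))]
    g1 = [(1 - a1[m] ** 2) * sum(g2[k] * W2[k][m] for k in range(len(g2)))
          for m in range(len(a1))]
    return [outer(g1, x), g1, outer(g2, a1), g2, outer(g3, a2), g3]

def batch_loss(params, X, Y):
    total = 0.0
    for x, y in zip(X, Y):
        y_hat = forward(params, x)[2]
        total += sum((p - t) ** 2 for p, t in zip(y_hat, y))
    return total / len(X)

def batch_grads(params, X, Y):
    """Average the per-sample gradients over the B samples of the mini-batch."""
    B = len(X)
    per_sample = [sample_grads(params, x, y) for x, y in zip(X, Y)]
    averaged = []
    for idx, p in enumerate(params):
        if isinstance(p[0], list):  # weight matrix
            averaged.append([[sum(g[idx][m][n] for g in per_sample) / B
                              for n in range(len(p[0]))] for m in range(len(p))])
        else:  # bias vector
            averaged.append([sum(g[idx][m] for g in per_sample) / B for m in range(len(p))])
    return averaged

def numeric_grad(params, X, Y, idx, m, n=None, eps=1e-6):
    """Central finite difference of the batch loss for one weight or bias entry."""
    row = params[idx] if n is None else params[idx][m]
    key = m if n is None else n
    old = row[key]
    row[key] = old + eps
    plus = batch_loss(params, X, Y)
    row[key] = old - eps
    minus = batch_loss(params, X, Y)
    row[key] = old  # restore
    return (plus - minus) / (2 * eps)

# Hypothetical parameters and a hypothetical mini-batch of B = 5 samples.
rng = random.Random(0)
sizes = [2, 3, 4, 2]
params = []
for n_in, n_out in zip(sizes[:-1], sizes[1:]):
    params.append([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)])
    params.append([rng.uniform(-1, 1) for _ in range(n_out)])
X = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(5)]
Y = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(5)]

grads = batch_grads(params, X, Y)

# Spot checks: one entry each of W1, W2, W3, and b2 (0-based indices).
checks = [(0, 2, 1), (2, 3, 0), (4, 1, 2), (3, 1, None)]
errors = []
for idx, m, n in checks:
    analytic = grads[idx][m] if n is None else grads[idx][m][n]
    errors.append(abs(analytic - numeric_grad(params, X, Y, idx, m, n)))
print(max(errors))  # expected to be tiny if the batch formulas are coded correctly
```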